With Red Hat recently releasing RHEL 7 (and CentOS getting their rebuild out the door shortly after), I decided to take the opportunity to start upgrading some of my ageing RHEL/CentOS (EL) systems.

My personal co-location server is a trusty P4 3.0GHz box running EL 5 for both the host and its Xen guests. Xen has lost some popularity in favour of HVM solutions like KVM, but it’s still a great hypervisor and runs Linux guests really nicely even on hardware as old as mine that lacks HVM CPU extensions.

Considering that EL 5, 6 and 7 are all still supported by Red Hat, I expected that installing EL 7 as a guest on EL 5 would be easy – and to be fair to Red Hat it mostly is; the installation itself was pretty standard.

Like EL 5 guests, EL 7 guests can be installed entirely from the command line using the standard virt-install command – for example:

$ virt-install --paravirt \
  --name MyCentOS7Guest \
  --ram 1024 \
  --vcpus 1 \
  --location http://mirror.centos.org/centos/7/os/x86_64/ \
  --file /dev/lv_group/MyCentOS7Guest \
  --network bridge=xenbr0

One issue I had is that the installer no longer prompts for the network settings it needs to download the rest of the installer; instead it assumes you have a DHCP server, which isn’t always the case. To force it to use a static address, append the following parameters to the virt-install command:

-x 'ip=192.168.1.20 netmask=255.255.255.0 dns=8.8.8.8 gateway=192.168.1.1'

The installer will then offer either VNC for a graphical install or the more basic/limited text mode installer. I went with text mode, which is generally fine for average installations, except that it doesn’t give you a lot of control over partitioning.

Installation completed successfully, but I was not able to subsequently boot the new guest, with an error being thrown about pygrub being unable to find the boot partition.

# xm create -c vmguest

Using config file "./vmguest".

Traceback (most recent call last):

File "/usr/bin/pygrub", line 774, in ?

raise RuntimeError, "Unable to find partition containing kernel"

RuntimeError: Unable to find partition containing kernel

No handlers could be found for logger "xend"

Error: Boot loader didn't return any data!

Usage: xm create <ConfigFile> [options] [vars]

Xen works a little differently to VMware/KVM/VirtualBox in that it doesn’t try to emulate hardware unnecessarily in paravirtualised mode, so there’s no BIOS. Instead Xen ships with a tool called pygrub, essentially an application that implements grub: it reads the guest’s /boot filesystem, displays a grub interface using the config found there, and once a kernel is selected, grabs the kernel and associated information and launches the guest with it.

Generally this works well; you can certainly boot any of your EL 5 guests with it, as well as other Linux distributions with Xen paravirtualised-compatible kernels (support is merged into upstream these days).
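To make that flow a bit more concrete, here’s a toy sketch of the "read the config, pick the default entry, hand back kernel and initrd" step for an old-style grub.conf. This is not pygrub’s actual code: the function names, the regular expression and the sample config (including the kernel version in it) are all made up for illustration.

```python
import re

# "default=0", "title Foo", "kernel /vmlinuz ..." -> (directive, argument)
DIRECTIVE = re.compile(r"(\w+)[=\s]\s*(.*)")

def parse_grub_legacy(text):
    """Return (default_index, entries) from a legacy grub.conf string."""
    default, entries = 0, []
    for raw in text.splitlines():
        match = DIRECTIVE.match(raw.strip())
        if match is None:          # blank lines, comments, bare keywords
            continue
        key, arg = match.groups()
        if key == "default":
            default = int(arg)
        elif key == "title":       # every title starts a new boot entry
            entries.append({"title": arg})
        elif key in ("kernel", "initrd") and entries:
            entries[-1][key] = arg
    return default, entries

def select_boot_entry(text):
    """Pick the default entry and split the kernel path from its arguments."""
    default, entries = parse_grub_legacy(text)
    entry = entries[default]
    kernel, _, args = entry["kernel"].partition(" ")
    return {"kernel": kernel, "args": args, "ramdisk": entry.get("initrd")}

# Illustrative EL 5 style config (hypothetical kernel version)
SAMPLE = """
default=0
timeout=5
title CentOS (2.6.18-371.el5xen)
        root (hd0,0)
        kernel /vmlinuz-2.6.18-371.el5xen ro root=/dev/VolGroup00/LogVol00
        initrd /initrd-2.6.18-371.el5xen.img
"""

print(select_boot_entry(SAMPLE))
```

The real tool does the same job against the guest’s actual /boot filesystem, which is exactly where the filesystem type and config format start to matter.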

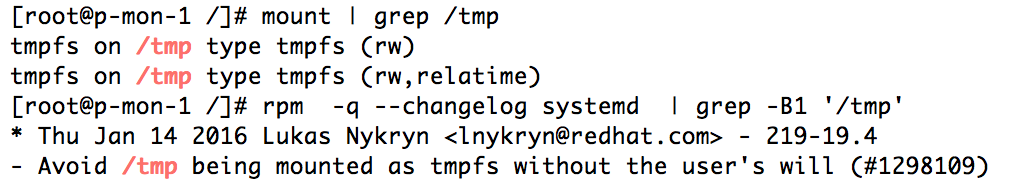

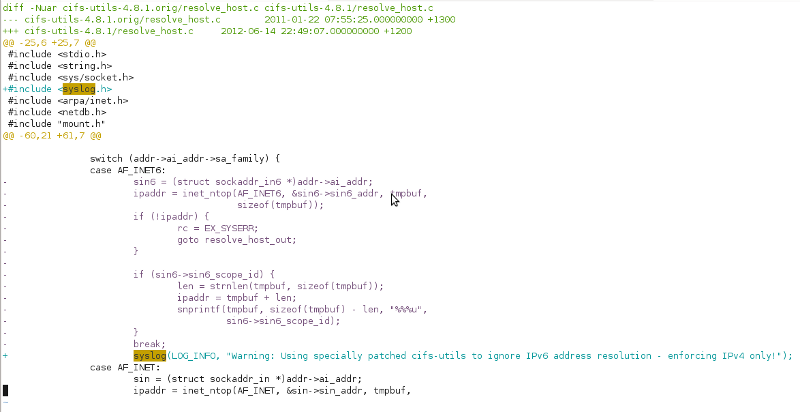

However RHEL has moved on a bit since 2007, adding a few new tricks such as replacing Grub with Grub2 and moving from the typical ext3 boot partition to an xfs boot partition. These changes confuse the much older utilities written for Xen, leaving them unable to read the boot loader data and launch the guest.

The two main problems come down to:

- EL 5 can’t read the xfs boot partition created by default by the EL 7 installer. Even if you install the optional xfs packages provided by centosplus/centosextras, you still can’t read the filesystem because its version of xfs is too new for EL 5 to comprehend.

- The version of pygrub shipped with EL 5 doesn’t have working support for Grub2. Well, technically it’s supposed to according to Red Hat, but I suspect they forgot to merge in the fixes needed to make EL 7 boot.

I hope that Red Hat fix this deficiency soon; presumably there will be Red Hat customers wanting to do exactly what I’m doing who will apply some pressure for a fix. Until then, if you want to get your shiny new EL 7 guests installed, I have a bunch of workarounds for those who are not faint of heart.

For these instructions, I’m assuming that your guest is installed to /dev/lv_group/vmguest, but they should work equally well for image files or other block devices.

Firstly, we need to check what the state of the /boot partition is – we need to make sure it is an ext3 volume, or convert it if not. If you installed via the limited text mode installer, it will be an xfs partition; if you installed via VNC, you might be able to change the type to ext3 and avoid the next few steps entirely.

We use kpartx -a and -d respectively to expose the partitions inside the block device so we can manipulate the contents. We then use the good ol’ file command to check what type of filesystem is on the first partition (which is presumably boot).

# kpartx -a /dev/lv_group/vmguest

# file -sL /dev/mapper/vmguestp1

/dev/mapper/vmguestp1: SGI XFS filesystem data (blksz 4096, inosz 256, v2 dirs)

# kpartx -d /dev/lv_group/vmguest

Being xfs, we’re probably unable to do much – if we install xfsprogs (from centos extras) and run it with the partitions mapped via kpartx -a, we can verify it’s unreadable by the host OS:

# yum install xfsprogs

# xfs_check /dev/mapper/vmguestp1

bad sb version # 0xb4b4 in ag 0

bad sb version # 0xb4a4 in ag 1

bad sb version # 0xb4a4 in ag 2

bad sb version # 0xb4a4 in ag 3

WARNING: this may be a newer XFS filesystem.

#

Technically you could fix this by upgrading the kernel, but EL 5’s kernel is a weird monster that includes all manner of patches for Xen that were never merged upstream, so it’s not a simple (or even feasible) operation.
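If you’d rather peek at the superblock yourself than rely on xfs_check, the version field it complains about can be read directly. The sketch below is based on my reading of the XFS on-disk layout (magic "XFSB" at offset 0, a big-endian 16-bit sb_versionnum at offset 100, low four bits being the format version), so treat the offsets as assumptions and the function names as hypothetical. The sample bytes are synthetic, built around the 0xb4b4 value from the output above.

```python
import struct

XFS_MAGIC = b"XFSB"           # first four bytes of an XFS superblock
SB_VERSIONNUM_OFFSET = 100    # assumed offset of the big-endian uint16 sb_versionnum
SB_VERSION_NUMBITS = 0x000F   # low nibble holds the format version

def xfs_sb_version(superblock):
    """Return (version, feature_bits) from the first bytes of an XFS filesystem."""
    if superblock[:4] != XFS_MAGIC:
        raise ValueError("not an XFS superblock")
    (versionnum,) = struct.unpack_from(">H", superblock, SB_VERSIONNUM_OFFSET)
    version = versionnum & SB_VERSION_NUMBITS
    features = versionnum & ~SB_VERSION_NUMBITS & 0xFFFF
    return version, features

def read_xfs_sb_version(path):
    """Read the primary superblock from a device or image file (needs read access)."""
    with open(path, "rb") as dev:
        return xfs_sb_version(dev.read(512))

# Synthetic superblock carrying the version number xfs_check printed for ag 0
sample = bytearray(512)
sample[:4] = XFS_MAGIC
struct.pack_into(">H", sample, SB_VERSIONNUM_OFFSET, 0xB4B4)

version, features = xfs_sb_version(bytes(sample))
print("version %d, feature bits 0x%04x" % (version, features))
```

On the Xen host you would point read_xfs_sb_version() at the mapped partition, e.g. /dev/mapper/vmguestp1, as root.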

We can convert the filesystem from xfs to ext3 by using another, newer Linux system. First we need to export the boot volume into an image file (with the partitions still mapped via kpartx -a):

# dd if=/dev/mapper/vmguestp1 | bzip2 > /tmp/boot.img.bz2

Then copy the file to another host, where we will unpack it and recreate the image file with ext3 and the same contents.

$ bunzip2 boot.img.bz2

$ mkdir tmp1 tmp2

$ sudo mount -t xfs -o loop boot.img tmp1/

$ sudo cp -a tmp1/. tmp2/

$ sudo umount tmp1/

$ sudo mkfs.ext3 -F boot.img

$ sudo mount -t ext3 -o loop boot.img tmp1/

$ sudo cp -a tmp2/. tmp1/

$ sudo umount tmp1/

$ sudo rm -rf tmp1 tmp2

$ mv boot.img boot-new.img

$ bzip2 boot-new.img

Copy the new file (boot-new.img.bz2) back to the Xen host server and replace the guest’s /boot volume with it.

# kpartx -a /dev/lv_group/vmguest

# bzcat boot-new.img.bz2 > /dev/mapper/vmguestp1

# kpartx -d /dev/lv_group/vmguest

With the filesystem fixed, Xen’s pygrub is able to read it, but your guest still won’t boot. :-( On the plus side, it throws a more useful error showing that it could access the filesystem but couldn’t parse some of the data inside it.

# xm create -c vmguest

Using config file "./vmguest".

Using <class 'grub.GrubConf.Grub2ConfigFile'> to parse /grub2/grub.cfg

Traceback (most recent call last):

File "/usr/bin/pygrub", line 758, in ?

chosencfg = run_grub(file, entry, fs)

File "/usr/bin/pygrub", line 581, in run_grub

g = Grub(file, fs)

File "/usr/bin/pygrub", line 223, in __init__

self.read_config(file, fs)

File "/usr/bin/pygrub", line 443, in read_config

self.cf.parse(buf)

File "/usr/lib64/python2.4/site-packages/grub/GrubConf.py", line 430, in parse

setattr(self, self.commands[com], arg.strip())

File "/usr/lib64/python2.4/site-packages/grub/GrubConf.py", line 233, in _set_default

self._default = int(val)

ValueError: invalid literal for int(): ${next_entry}

No handlers could be found for logger "xend"

Error: Boot loader didn't return any data!
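The last frames of that traceback boil down to the value of the config’s default setting being handed straight to int(). A minimal sketch of that failure, plus a hypothetical tolerant variant (my own illustration, not what any pygrub version actually does):

```python
def parse_default(value):
    """Mimic the failing step: the 'default' value goes straight into int()."""
    return int(value)

def parse_default_tolerant(value, fallback=0):
    """Hypothetical workaround: use the first entry when the value is an
    unexpanded Grub2 variable rather than a number."""
    try:
        return int(value)
    except ValueError:
        return fallback

print(parse_default("1"))  # numeric defaults are fine

try:
    parse_default("${next_entry}")  # what the EL 7 grub.cfg hands over
except ValueError as exc:
    print("ValueError:", exc)

print(parse_default_tolerant("${next_entry}"))
```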

At a glance, it looks like pygrub can’t handle the special variables/functions used in the EL 7 grub configuration file. However, even if you remove them and simplify the configuration down to the core basics, it will still blow up:

# xm create -c vmguest

Using config file "./vmguest".

Using <class 'grub.GrubConf.Grub2ConfigFile'> to parse /grub2/grub.cfg

WARNING:root:Unknown image directive load_video

WARNING:root:Unknown image directive if

WARNING:root:Unknown image directive else

WARNING:root:Unknown image directive fi

WARNING:root:Unknown image directive linux16

WARNING:root:Unknown image directive initrd16

WARNING:root:Unknown image directive load_video

WARNING:root:Unknown image directive if

WARNING:root:Unknown image directive else

WARNING:root:Unknown image directive fi

WARNING:root:Unknown image directive linux16

WARNING:root:Unknown image directive initrd16

WARNING:root:Unknown directive source

WARNING:root:Unknown directive elif

WARNING:root:Unknown directive source

Traceback (most recent call last):

File "/usr/bin/pygrub", line 758, in ?

chosencfg = run_grub(file, entry, fs)

File "/usr/bin/pygrub", line 604, in run_grub

grubcfg["kernel"] = img.kernel[1]

TypeError: unsubscriptable object

No handlers could be found for logger "xend"

Error: Boot loader didn't return any data!

Usage: xm create <ConfigFile> [options] [vars]

Create a domain based on <ConfigFile>

At this point it’s pretty clear that pygrub won’t be able to parse the configuration file, so you’re left with two options:

- Copy the kernel and initrd file from the guest to somewhere on the host and set Xen to boot directly using those host-located files. However, updating the guest’s kernel then becomes a pain.

- Backport a working pygrub to the old Xen host and use that to boot the guest. This requires no changes to the Grub2 configuration and means your guest will seamlessly handle kernel updates.
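For reference, the first option would look roughly like the domain config below (xm config files use Python syntax). The host paths and the root= argument are assumptions for illustration; copy the real kernel arguments from the linux16 line in the guest’s grub.cfg. The kernel version is the one my guest ended up booting.

```python
# Hypothetical /etc/xen/vmguest for booting without pygrub
name = "vmguest"
memory = 1024
vcpus = 1

# Kernel and initrd copied out of the guest's /boot onto the host
# (hypothetical location; repeat the copy after every guest kernel update)
kernel = "/var/lib/xen/boot/vmguest/vmlinuz-3.10.0-123.el7.x86_64"
ramdisk = "/var/lib/xen/boot/vmguest/initramfs-3.10.0-123.el7.x86_64.img"

# Kernel command line; the root device here is an assumption
extra = "root=/dev/mapper/centos-root ro"

disk = ["phy:/dev/lv_group/vmguest,xvda,w"]
vif = ["bridge=xenbr0"]

# Note: no 'bootloader = "/usr/bin/pygrub"' line, so Xen loads the kernel above directly
```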

Because option 2 is harder and more painful, I naturally chose to go down that path, backporting the latest upstream Xen pygrub source code to EL 5. It’s not quite vanilla: I had to make some tweaks to rip out a couple of newer features that were breaking it on EL 5. I’ve packaged up my version of pygrub and made it available in both source and binary formats.

Download Jethro’s pygrub backport here

Installing this *will* replace the version installed by the Xen package, which means an update to that package on the host will undo these changes. I thought about installing it to another path or making an RPM, but my hope is that Red Hat get their Xen package fixed and make this whole blog post redundant, so I haven’t invested that level of effort.

Copy to your server and unpack with:

# tar -xkzvf xen-pygrub-6f96a67-JCbackport.tar.gz

# cd xen-pygrub-6f96a67-JCbackport

Then you can build the source into a python module and install with:

# yum install xen-devel gcc python-devel

# python setup.py build

running build

running build_py

creating build

creating build/lib.linux-x86_64-2.4

creating build/lib.linux-x86_64-2.4/grub

copying src/GrubConf.py -> build/lib.linux-x86_64-2.4/grub

copying src/LiloConf.py -> build/lib.linux-x86_64-2.4/grub

copying src/ExtLinuxConf.py -> build/lib.linux-x86_64-2.4/grub

copying src/__init__.py -> build/lib.linux-x86_64-2.4/grub

running build_ext

building 'fsimage' extension

creating build/temp.linux-x86_64-2.4

creating build/temp.linux-x86_64-2.4/src

creating build/temp.linux-x86_64-2.4/src/fsimage

gcc -pthread -fno-strict-aliasing -DNDEBUG -O2 -g -pipe -Wall -Wp,-D_FORTIFY_SOURCE=2 -fexceptions -fstack-protector --param=ssp-buffer-size=4 -m64 -mtune=generic -D_GNU_SOURCE -fPIC -fPIC -I../../tools/libfsimage/common/ -I/usr/include/python2.4 -c src/fsimage/fsimage.c -o build/temp.linux-x86_64-2.4/src/fsimage/fsimage.o -fno-strict-aliasing -Werror

gcc -pthread -shared build/temp.linux-x86_64-2.4/src/fsimage/fsimage.o -L../../tools/libfsimage/common/ -lfsimage -o build/lib.linux-x86_64-2.4/fsimage.so

running build_scripts

creating build/scripts-2.4

copying and adjusting src/pygrub -> build/scripts-2.4

changing mode of build/scripts-2.4/pygrub from 644 to 755

# python setup.py install

Naturally I recommend reviewing the source code and making sure it’s legit (you do trust random blogs, right?), but if you can’t get it to build/lack build tools/like gambling, I’ve included pre-built binaries in the archive and you can just run:

# python setup.py install

Then do a quick check to make sure pygrub throws its help message, rather than any nasty errors indicating something went wrong.

# /usr/bin/pygrub

We’re almost ready to try booting again! First create a directory that the new pygrub expects:

# mkdir -p /var/run/xend/boot/

Then launch the machine creation – this time, it should actually boot and run through the usual systemd startup process. If you installed with /boot set to ext3 via the installer, everything should just work and you’ll be up and running!

If you had to do the xfs to ext3 conversion trick, the bootup process will explode with scary errors like the following:

.......

[ TIME ] Timed out waiting for device dev-disk-by\x2duuid-245...95b2c23.device.

[DEPEND] Dependency failed for /boot.

[DEPEND] Dependency failed for Local File Systems.

[DEPEND] Dependency failed for Relabel all filesystems, if necessary.

[DEPEND] Dependency failed for Mark the need to relabel after reboot.

[ 101.134423] systemd-journald[414]: Received request to flush runtime journal from PID 1

[ 101.658465] type=1305 audit(1405735466.679:4): audit_pid=476 old=0 auid=4294967295 ses=4294967295 subj=system_u:system_r:auditd_t:s0 res=1

Welcome to emergency mode! After logging in, type "journalctl -xb" to view

system logs, "systemctl reboot" to reboot, "systemctl default" to try again

to boot into default mode.

Give root password for maintenance

(or type Control-D to continue):

The issue is that the conversion of the filesystem changed its UUID, plus the filesystem type in /etc/fstab no longer matches.

We can fix this easily by dropping to the recovery shell by entering the root password above and executing the following commands:

guest# sed -i -e '/boot/ s/UUID=[0-9a-fA-F-]*/\/dev\/xvda1/' /etc/fstab

guest# sed -i -e '/boot/ s/xfs/ext3/' /etc/fstab

guest# grep '/boot' /etc/fstab

Make sure the grep returns a valid /boot line; it should now be using /dev/xvda1 as the device and ext3 as the filesystem.
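If you want to sanity-check the edit before touching the real file, the same rewrite can be tried against a copy of the fstab contents. This is just a sketch: fix_boot_entry is a hypothetical helper, the UUID in the sample is made up, and the /dev/xvda1 device assumes /boot is the first partition as above.

```python
import re

# A filesystem UUID is hex digits and hyphens, not just decimal digits
UUID_RE = re.compile(r"UUID=[0-9A-Fa-f-]+")

def fix_boot_entry(fstab_text, device="/dev/xvda1", old_fs="xfs", new_fs="ext3"):
    """Point the /boot entry at a device node and switch its filesystem type."""
    fixed = []
    for line in fstab_text.splitlines():
        fields = line.split()
        is_comment = line.lstrip().startswith("#")
        # Only touch the entry whose mount point is exactly /boot
        if not is_comment and len(fields) >= 3 and fields[1] == "/boot":
            line = UUID_RE.sub(device, line, count=1)
            line = re.sub(r"\b%s\b" % re.escape(old_fs), new_fs, line, count=1)
        fixed.append(line)
    return "\n".join(fixed) + "\n"

# Sample fstab with a made-up UUID
SAMPLE = (
    "/dev/mapper/centos-root / xfs defaults 1 1\n"
    "UUID=0f1e2d3c-aaaa-bbbb-cccc-1234567890ab /boot xfs defaults 1 2\n"
)

print(fix_boot_entry(SAMPLE), end="")
```

Only the /boot line changes; the root filesystem stays on xfs.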

Finally, stop and start the instance (reboots seem to hang for me):

guest# shutdown -h now

# xm create -c vmguest

It should now boot correctly! Go forth and enjoy your new VM!

CentOS Linux 7 (Core)

Kernel 3.10.0-123.el7.x86_64 on an x86_64

This is certainly a hack – doing this backport of pygrub solved my personal issue, but it’s entirely possible it may break other things, so do your own testing and determine whether it’s suitable for you and your environment or not.