Following on from yesterday’s ELB post, it’s worth noting that there’s another common scenario where you can trigger issues when accessing ELBs – many corporates enforce the use of an HTTP proxy for all outgoing traffic, sometimes transparently, other times less so.

Having a multi-AZ ELB and accessing it from your own data center isn’t too much of an issue: if each host does its own DNS lookup, your hosts should end up with a roughly 50/50 split across AZs, as each one resolves its own DNS record.

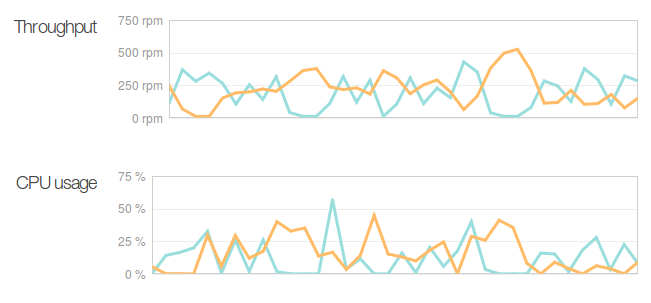

But when a proxy is added to the mix, it breaks this, since proxies tend to do their own DNS lookups and cache the results for use by other clients. Testing with Squid showed that its DNS cache would favour a particular AZ and send all traffic to that one AZ, before flipping to the other AZ when the cached entry expired and was refreshed – in my case, every 5 minutes when the TTL expired.
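To make the difference concrete, here is a rough simulation of the two cases, not a measurement of real ELB or Squid behaviour. It assumes each DNS lookup effectively picks one of two AZs at random, that the proxy caches exactly one answer per TTL window, and that each host sends one request per second. The AZ labels and the function names (`resolve`, `direct_clients`, `via_caching_proxy`) are made up for illustration.

```python
import random
from collections import Counter

AZS = ["az-a", "az-b"]   # hypothetical AZ labels
TTL_SECONDS = 300        # the 5-minute TTL mentioned above


def resolve(rng):
    """Stand-in for a DNS lookup: assume it picks one AZ at random."""
    return rng.choice(AZS)


def direct_clients(num_hosts, rng):
    """Each host resolves for itself, so hosts spread across AZs."""
    return Counter(resolve(rng) for _ in range(num_hosts))


def via_caching_proxy(num_hosts, duration_seconds, rng):
    """All hosts share one proxy that caches a single answer per TTL window.

    Returns one Counter per TTL window, counting one request per host
    per second.
    """
    windows = []
    for start in range(0, duration_seconds, TTL_SECONDS):
        cached_az = resolve(rng)  # one lookup serves every client
        seconds = min(TTL_SECONDS, duration_seconds - start)
        windows.append(Counter({cached_az: num_hosts * seconds}))
    return windows


rng = random.Random(42)
print("direct:", direct_clients(100, rng))
for i, window in enumerate(via_caching_proxy(100, 900, rng)):
    print("proxy window", i, window)
```

In the direct case the counts land on both AZs; in the proxy case every window sends all of its traffic to a single AZ, and which AZ that is can change from one window to the next.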

If you’re using Squid, there’s little you can do in Squid itself to fix this – whilst you can adjust the Squid DNS caching times and approaches, short of disabling DNS caching and taking the performance hit of a DNS lookup for every new outbound request, you will always end up with load jumping between the AZs and causing havoc.

There are a few workaround options:

- Have multiple Squid proxies for your outbound traffic and load balance between them on your network. If you load balance outgoing traffic across 4+ different outbound Squid servers, your load should end up going to different AZs a *bit* more evenly – but that’s still not guaranteed.

- Create an internal ELB and access via your VPC link, allowing you to bypass your company’s outbound network proxies (as traffic routes via VPN or Direct Connect) – but then you’re paying for 2x ELBs – one external for end users and one internal for your own systems.

- Replace the ELB with something actually useful (e.g. a Varnish or HAProxy instance in Amazon).

- Get rid of the outbound proxies please! I could write a business case for it based on the amount of money I’ve seen proxies waste at so many different companies (hint: engineers’ time debugging issues is much more expensive than a couple of GB of extra data usage).

- Gin.
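
As a back-of-the-envelope check on the multiple-proxy option, the sketch below works out how likely it is that every proxy ends up caching the same AZ at a given moment. It assumes two AZs and that each proxy’s cached answer is an independent 50/50 pick, which is a simplification; the function names are hypothetical.

```python
from math import comb


def split_probabilities(num_proxies):
    """P(k proxies cached the first AZ) for k = 0..num_proxies,
    assuming independent 50/50 picks per proxy."""
    total = 2 ** num_proxies
    return [comb(num_proxies, k) / total for k in range(num_proxies + 1)]


def prob_all_one_az(num_proxies):
    """Probability that every proxy is pointing at the same AZ."""
    probs = split_probabilities(num_proxies)
    return probs[0] + probs[-1]


for n in (1, 2, 4, 8):
    print(n, "proxies -> P(all traffic on one AZ) =", prob_all_one_az(n))
```

Under those assumptions, four proxies still leave a 12.5% chance that all traffic lands on one AZ at any given time, and only a 37.5% chance of an even 2/2 split, which is why more proxies help but don’t guarantee balance.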

“(hint: engineers time debugging issues is much more expensive than a couple GB extra data usage).”

People actually still use proxies for caching content?

I haven’t actually ever worked at or heard of many places using it as a forward cache; most use it to filter content, not cache it.

I’ve seen them used for both, or in a non-filtering setup for “audit” purposes – of course the companies doing this then forget to retain the log files, so their audit value is effectively useless.

Proxies have their place, but they should be transparent and not on the DMZ server networks.